Willingness to pay is often cited as the predominant framework used to set pricing for your SaaS — and more broadly, XaaS — products

August 2022

Author: Bryan Belanger

Willingness to pay is a simple economic concept that refers to the maximum price a customer is willing to pay for a given product or service offering.

Why does WTP matter? Willingness to pay is often cited as the predominant framework used to set pricing for your SaaS — and more broadly, XaaS — products. If you’re a generalist SaaS product marketer, pricing specialist within a product marketing team, or dedicated pricing strategy leader, you’ve likely explored, encountered or used WTP research methods to set a pricing strategy.

WTP research is often executed prior to the launch of a new or updated product or service offering. The intent of research at this stage is to set or refine list pricing that is planned for the new product or service. While this is the most common impetus, companies with more mature pricing programs also conduct willingness-to-pay research on a quarterly, semiannual or annual basis to understand changes in the price sensitivity for existing offerings.

ProfitWell, through its Price Intelligently service, has emerged as a SaaS industry authority on pricing. Price Intelligently relies on a proprietary approach that is based on willingness-to-pay research (more on this below).

WTP is an invaluable concept and can be efficiently deployed in pricing research. Willingness-to-pay research can quickly yield tactical, tangible data on pricing and customer profiles that can be put into practice.

While these factors make WTP a key element in your pricing strategy arsenal, there are important drawbacks to consider when employing it in your price setting program.

First, it’s important to have a shared understanding of how willingness-to-pay information is collected. Typically, collecting WTP data involves customer research.

Customer research can take many forms. Some of the key elements that might guide your analysis include:

All of these factors will inform your selected approaches for customer research as well as the methodologies you choose to design that research. The goals and constraints of your customer research effort will help you determine whether you should use internal research, external research (with a third-party provider), or a hybrid approach, and whether you should employ quantitative methods, qualitative methods, or a mix of the two.

In our Ultimate Guide to Conducting SaaS Pricing Research, we touched on these trade-offs, constraints, approaches and methods in the general context of conducting SaaS pricing research. The following are the key methodologies that can be used to collect customer research, including willingness-to-pay insights:

Peter Van Westendorp was a Dutch economist who developed the Van Westendorp Price Sensitivity Meter (PSM), a survey-based technique for gauging the range of prices customers find acceptable.

The crux of the methodology involves asking the following four questions in customer research. Any of the methodologies we outlined previously can be used to ask these questions. However, most practitioners structure an online survey to field Van Westendorp research, with structured segmentation of the research fielding to defined customer segments.

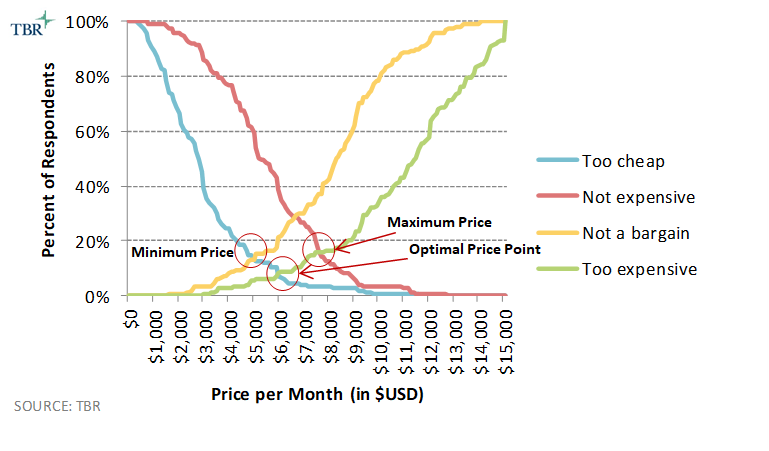

You then plot the distribution of responses to each of those questions on a graph, calculating the following:

The result looks something like the graph below, which is an actual example output from a recent study we completed using this methodology. The methodology provides a minimum acceptable price (also called the “point of marginal cheapness”), a maximum acceptable price (“point of marginal expensiveness”), and the optimal price point (OPP). Typically, those using this methodology view the range of prices between the minimum and maximum price as the acceptable price range for the product or service.
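To make the calculation concrete, here is a minimal Python sketch of how the three price points can be derived from survey responses. It assumes each respondent gave the four standard PSM answers (the prices at which the offering feels too cheap, a bargain, getting expensive, and too expensive). Conventions for which curves define each point vary between practitioners; this sketch uses one common formulation and a coarse price grid rather than interpolation. The function names and the six sample responses are hypothetical and purely illustrative, not data from our study.

```python
def share(answers, test):
    """Share of respondents whose answer passes the given test."""
    return sum(test(a) for a in answers) / len(answers)


def first_crossing(grid, falling, rising):
    """First grid price at which the rising curve meets or exceeds the falling one."""
    for price in grid:
        if rising(price) >= falling(price):
            return price
    return None  # the curves never cross on this grid


def van_westendorp(too_cheap, cheap, expensive, too_expensive, grid):
    """Estimate PMC, OPP and PME from the four PSM answers (assumed formulation)."""
    # Falling curves: fewer respondents agree as the price rises.
    is_too_cheap = lambda p: share(too_cheap, lambda a: a >= p)
    is_not_expensive = lambda p: share(expensive, lambda a: a > p)
    # Rising curves: more respondents agree as the price rises.
    is_not_cheap = lambda p: share(cheap, lambda a: a < p)
    is_too_expensive = lambda p: share(too_expensive, lambda a: a <= p)
    return {
        "pmc": first_crossing(grid, is_too_cheap, is_not_cheap),
        "opp": first_crossing(grid, is_too_cheap, is_too_expensive),
        "pme": first_crossing(grid, is_not_expensive, is_too_expensive),
    }


# Hypothetical monthly prices from six respondents, one value per question each.
too_cheap = [10, 15, 20, 25, 30, 35]
cheap = [20, 25, 30, 40, 45, 50]
expensive = [30, 40, 45, 55, 60, 70]
too_expensive = [35, 50, 60, 70, 80, 90]

result = van_westendorp(too_cheap, cheap, expensive, too_expensive, range(10, 95, 5))
print(result)  # {'pmc': 30, 'opp': 35, 'pme': 55}
```

With these made-up responses, the acceptable price range runs from 30 to 55, with an optimal price point of 35. A real study would use far more respondents and would typically run the calculation separately for each customer segment.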

This approach is the foundation of ProfitWell’s Price Intelligently, which many consider the authority on SaaS pricing. ProfitWell is not alone, either; most in the SaaS industry espouse Van Westendorp as the go-to methodology for determining pricing. This article, for example, suggests that Van Westendorp has become popular among Silicon Valley-based companies and startups.

Van Westendorp has notable benefits. It’s easy to understand and simple to deploy. It provides a clear “answer.” It’s repeatable. It can be combined with other survey approaches to gather insights on pricing models and packaging through asking questions on relative preference of features associated with the products that are being tested. We use Van Westendorp in the same way that ProfitWell and others do.

While Van Westendorp is a critical part of a product marketer’s or pricing strategist’s arsenal, relying on it alone to inform your product pricing carries risks. Some are overarching drawbacks of the approach; others are tactical issues that arise in implementing the methodology.

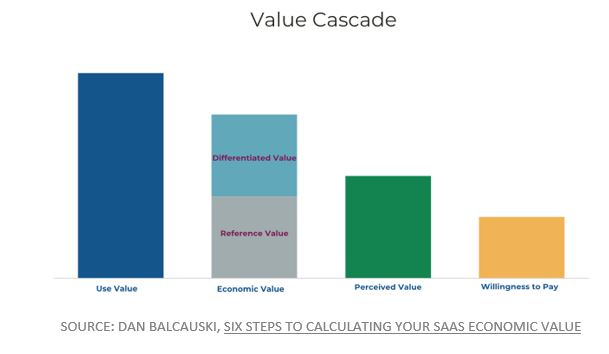

We’ve referenced our friend Dan Balcauski’s posts a few times, including this latest one on calculating the economic value of your SaaS offering.

In a recent post, he walks us through the Value Cascade, which he adapted from the Thomas Nagle book “The Strategy and Tactics of Pricing.” Borrowing this image from Dan to illustrate the value cascade:

Here’s where the big-picture issue with Van Westendorp comes in: there’s a tendency to jump right into Van Westendorp to set pricing for a SaaS offering. You can run a study in a few weeks, crunch the results, and have answers on what your pricing should be. That approach, however, skips the quantification of value, a process that Balcauski defines in detail in his posts. That process includes assessing competitive alternatives and the value differentiation your product provides. If value is incorrectly defined (or worse, not defined at all), the willingness-to-pay research is built on a flawed premise, which in turn skews the results of your study.

In practice, there are also several tactical issues that crop up with Van Westendorp studies. In our experience, these have included:

To reiterate our earlier message, the first step in designing a better pricing research framework is to ensure you aren’t putting the cart before the horse. In other words, make sure you aren’t diving directly into willingness to pay without first defining and measuring value using a process like the one Balcauski outlines in his blog.

This process involves careful consideration of competitive alternatives during the “calculating economic value” stage, which is where a platform like XaaS Pricing can help. This process also involves using the types of customer research outlined in this post to define and quantify value. A deep dive on that topic is beyond the scope of this post, but research isn’t just for price setting — don’t discount the importance of customer research in defining value. For that, we love customer interviews.
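As a rough illustration of the “calculating economic value” stage, the sketch below follows the general idea as we understand it from Nagle’s framework: start from the price of the customer’s next-best competitive alternative, then add the net value of what your product does differently. The function name, parameters and figures are hypothetical, and in practice the differentiation values would come from the customer research described above.

```python
def economic_value(reference_price, positive_differentiation, negative_differentiation=0.0):
    """Reference value plus net differentiation value, all in the same currency unit.

    reference_price: price of the next-best competitive alternative (assumed known).
    positive_differentiation: value of what your product does better.
    negative_differentiation: value of what the alternative does better.
    """
    return reference_price + positive_differentiation - negative_differentiation


# Hypothetical per-user monthly figures, for illustration only.
value_estimate = economic_value(
    reference_price=50.0,
    positive_differentiation=20.0,
    negative_differentiation=5.0,
)
print(value_estimate)  # 65.0
```

An estimate like this gives you a benchmark to compare against the acceptable price range that comes out of willingness-to-pay research. A large gap between the two is a signal to revisit how value was defined or communicated in the study.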

Let’s assume you’ve moved through those steps of defining value, including looking at competitors. Let’s also assume you’ve done the blocking and tackling of defining goals and there is a specific outcome for the pricing exercise you’re working on. Now you’ve arrived at the stage of establishing willingness to pay, and you have to select the appropriate research framework for gathering customer data.

We aren’t going to offer one-size-fits-all frameworks or tools on the exact methodology you should use once you get to this stage. It’s going to depend on the specifics of your situation, which can include both external factors and real internal constraints that may limit your approach (for example, maybe you just don’t know how to program and run a survey, and don’t have the budget to hire someone to do it). But we can offer a few rules of thumb that have served us well in doing this type of research over the past 10-plus years:

©2022 XaaS Pricing. All rights reserved. Terms of Service | Website Maintained by Tidal Media Group

The temptation of SaaS pricing hacks — and the reality
